AI can make compliance faster. It can review documents, highlight missing information, and give you a clearer overview across suppliers.

But AI does not walk factory floors. It does not observe working conditions. And it does not verify whether procedures are actually followed.

If you are using, or considering using, AI in compliance, here is what you should not overlook.

A document is not proof

AI can confirm that a certificate has been uploaded or that a policy exists. It cannot confirm that the certified material is being used today, or that the safety policy is followed in practice.

Many compliance failures do not happen because documents are missing. They happen because what is written does not match what is done.

Documents support compliance. They do not prove it.

The way data is collected matters

Before trusting AI insights, ask yourself a simple question: how was this information captured?

Was it uploaded later from a desktop? Or was it collected during an on-site audit? Is it time-stamped? Is it tied to a specific location and a responsible person?

Data captured in real time, inside a structured audit, is fundamentally different from data uploaded afterwards: it shows when, where, and by whom each observation was recorded. AI can only work with what it receives. If the input is weak, the output will be too.

Real-time evidence is becoming more important, not less

It is easier than ever to edit documents, reuse photos, or generate polished reports.

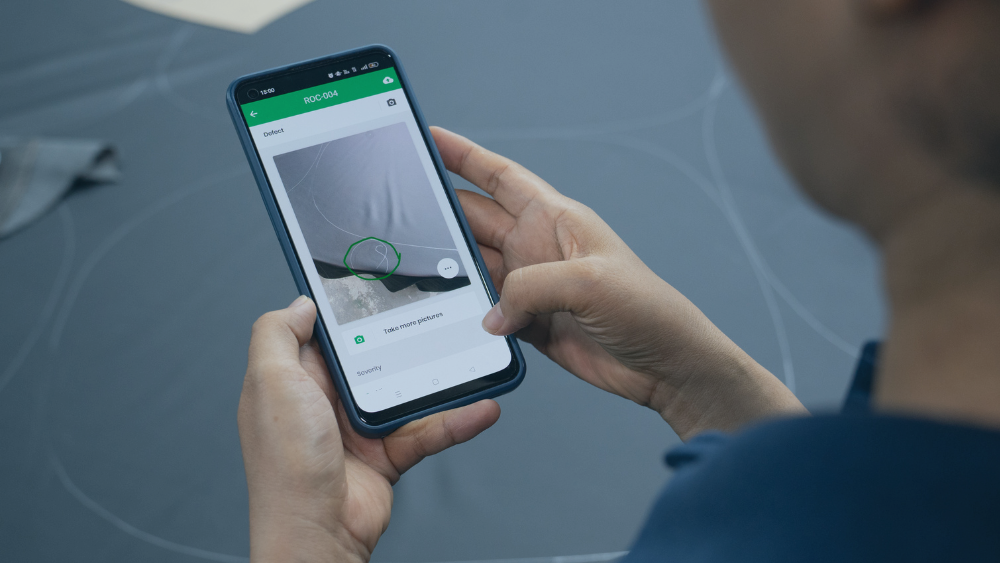

That makes real-time photo and video documentation more important. When evidence is captured on-site, during the audit itself, and linked directly to specific checkpoints, you create a clear connection between the finding and the physical reality.

If AI is later used to analyze results, it will be working with verified observations rather than curated uploads.

Human judgment still matters

AI is good at spotting patterns and flagging inconsistencies. It can tell you what looks unusual.

It cannot sense hesitation in an answer. It cannot notice behavior outside the checklist. It cannot ask follow-up questions based on what it sees in the room.

Compliance decisions often depend on context. Auditors must remain responsible for judgment. AI should support their work, not replace it.

Self-reported data has limits

Many AI-driven setups rely heavily on supplier self-assessments and uploaded documentation.

Those inputs are useful. But they are still self-reported. Suppliers may misunderstand requirements or present their operations in the best possible light.

Without structured audits and required on-site evidence, AI simply processes self-reported data more efficiently. Efficiency does not automatically mean reliability.

Can you defend it?

Ultimately, the real test of your compliance setup is simple: could you defend it?

If questioned, can you clearly show what was checked, when it was checked, who performed the audit, and what evidence supports the conclusion?

AI-generated summaries are helpful. But the strength of your compliance system lies in the underlying audit trail and the quality of the evidence collected.

In short

Use AI to gain an overview, prioritize risk, and reduce manual work.

But build your compliance on structured audits, real-time documentation, and clear accountability. Anchor your digital systems in the physical world.

AI can strengthen compliance. It cannot replace seeing what is actually there.

Want stronger control over your audits and supplier compliance?

Qarma helps you run structured digital audits, require time-stamped photo and video evidence, standardize audits and assessments across suppliers, and maintain a clear, defensible audit trail.

If you are using AI, make sure your foundation is solid.